How Shrinking Pixels Push Optics to the Limit — and How Neural Restoration Pushes Back

By: Deep Optics Team at Glass Imaging

Jingxi Li, Neerja Aggarwal, Tom Bishop, Ziv Attar

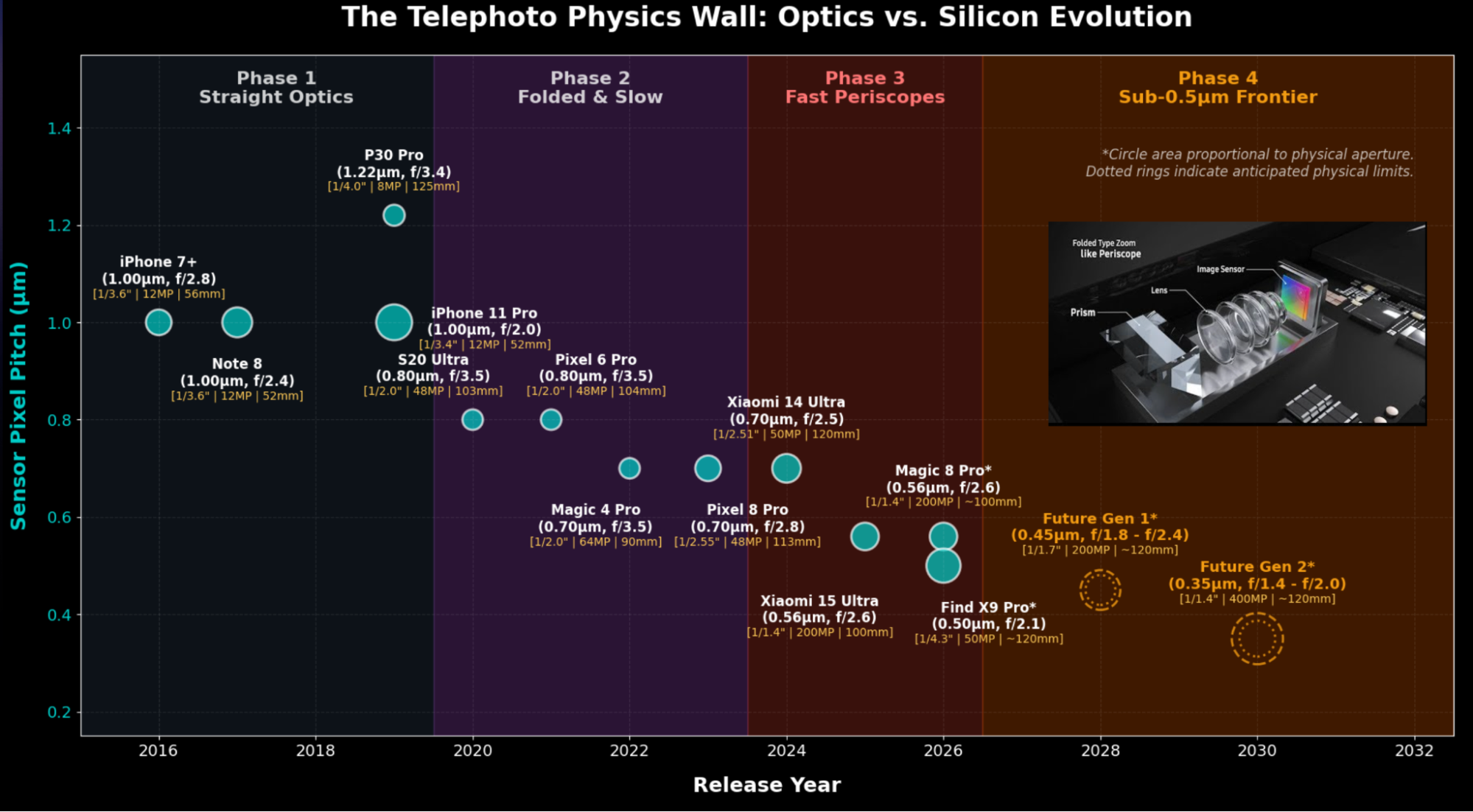

The Telephoto Physics Wall

Smartphone telephoto cameras have undergone a dramatic transformation over the past decade (see Figure 1). Pixel pitch has shrunk from 1.0 µm in the iPhone 7+ era down to around 0.5 µm in today’s 200MP periscope modules — with sub-0.5 µm designs already on the horizon. Each generation packs more resolving power into the same physical sensor area.

But as pixels shrink, the optics must keep up. The lens must work harder to create a smaller point spread function (PSF), i.e. the image of a single point from the scene. To keep diffraction blur from dominating at smaller pixel sizes, camera designers lower the F-number, opening the aperture wider so that the diffraction spot stays small compared to the pixel pitch. This leads to faster lenses (i.e. lenses with a larger aperture, which collect more light and typically allow faster shutter speeds). The recent Oppo Find X9 Pro (0.5 µm, F/2.1) has a significantly faster lens than the Honor Magic 8 Pro (0.56 µm, F/2.6), and this trend will only accelerate.
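To make the relationship between F-number and diffraction spot concrete, here is a minimal sketch that applies the textbook Airy disk formula (diameter = 2.44 · λ · F/#) to the two modules quoted above. The 0.55 µm wavelength and the function names are assumptions chosen for illustration, not values from our simulation.

```python
def airy_diameter_um(f_number, wavelength_um=0.55):
    """Airy disk diameter (across the first dark ring) in micrometres."""
    return 2.44 * wavelength_um * f_number


def spot_in_pixels(f_number, pitch_um, wavelength_um=0.55):
    """Airy disk diameter expressed in units of the pixel pitch."""
    return airy_diameter_um(f_number, wavelength_um) / pitch_um


# (pixel pitch in µm, F-number) as quoted in the text above
modules = {
    "Oppo Find X9 Pro": (0.50, 2.1),
    "Honor Magic 8 Pro": (0.56, 2.6),
}

for name, (pitch_um, f_number) in modules.items():
    print(f"{name}: Airy disk spans {spot_in_pixels(f_number, pitch_um):.2f} px")
```

Under these assumptions the faster F/2.1 lens yields a spot of roughly 5.6 pixels, versus roughly 6.2 pixels for the F/2.6 module, even though its pixels are smaller.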

There is a trade-off with opening the aperture, however. A wider aperture means the lens captures rays from steeper angles at the edge of the pupil. These marginal rays contribute more to geometric aberrations: spherical aberration, coma, astigmatism, and higher-order terms that even the best lens designs struggle to eliminate. Thus, while the diffraction spot size is reduced, aberration-based blur increases with these wider apertures. We’re approaching what we call the “telephoto physics wall”: the point where silicon scaling outpaces what conventional optics and traditional ISP can cleanly deliver, and further resolution gain is limited.

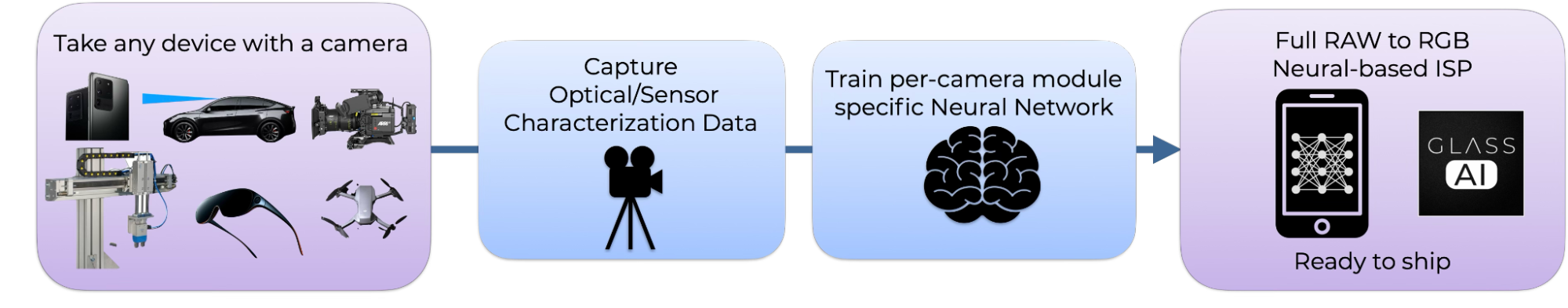

Over the past few years, Glass Imaging has developed a neural ISP, called Glass AI, that could help overcome this issue. As shown in Figure 2, we train a camera-specific neural network that undoes the optical aberrations and sensor effects and can run in real time on edge devices. We have tested our approach against existing state-of-the-art smartphone ISPs and clearly outperformed them (see our comparison of the iPhone 16 + Glass AI vs. the iPhone 17).

This raises a fundamental question: as pixels shrink, apertures widen, and aberrations grow, how well can a Neural ISP enable recovery of lost detail? Can a Neural ISP break the limits faced by a traditional ISP and show that decreasing pixel sizes below 0.5 µm, with sensors beyond 200MP, can still provide a meaningful resolution benefit?

To answer this, we designed a controlled simulation study that isolates these effects. We simulated image formation through a set of representative smartphone lens modules with increasing aperture, and corresponding CMOS image sensors with decreasing pixel size.

Our study demonstrates the necessity of using Neural ISPs to deblur severely aberrated PSFs as pixel size shrinks to enable the next generation of high resolution telephoto cameras. By comparison, when only using traditional ISP without lens-specific deconvolution, we see that the telephoto physics wall comes into play, with limited benefit to using smaller pixels.

Experiment Design: Scaling Pixel Size and Aperture Together

Simulating images from a set of cameras with realistic optical blur

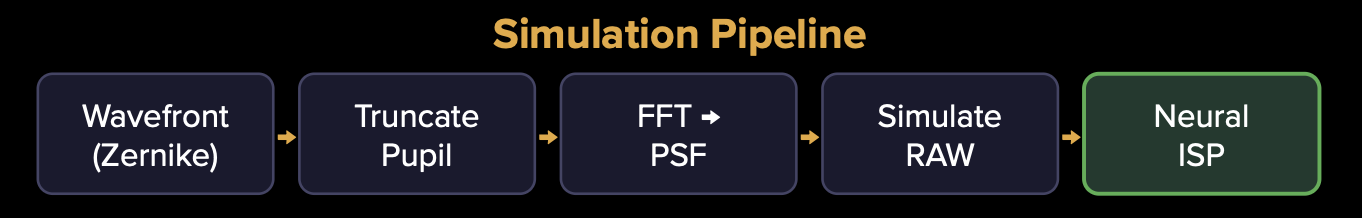

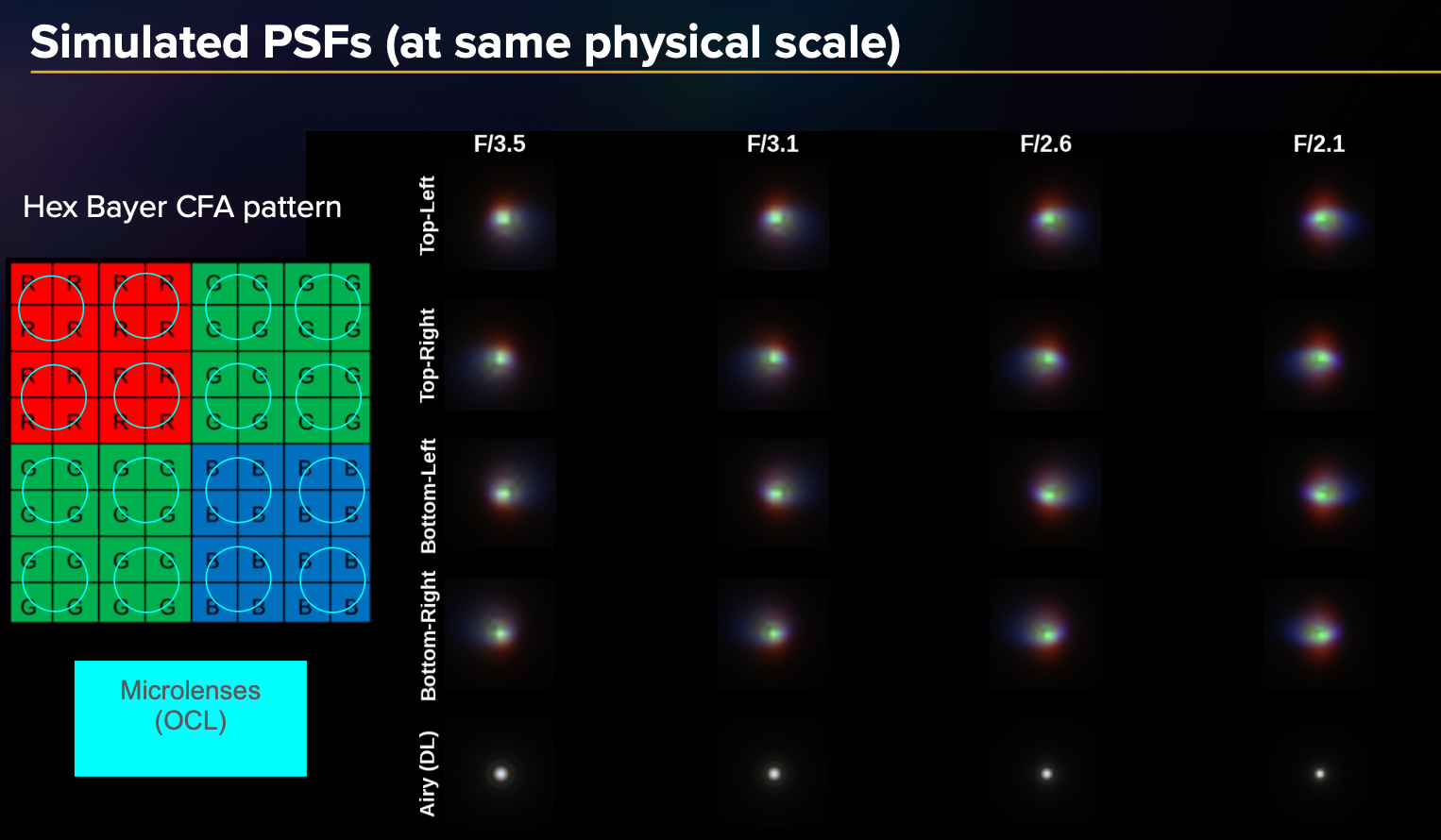

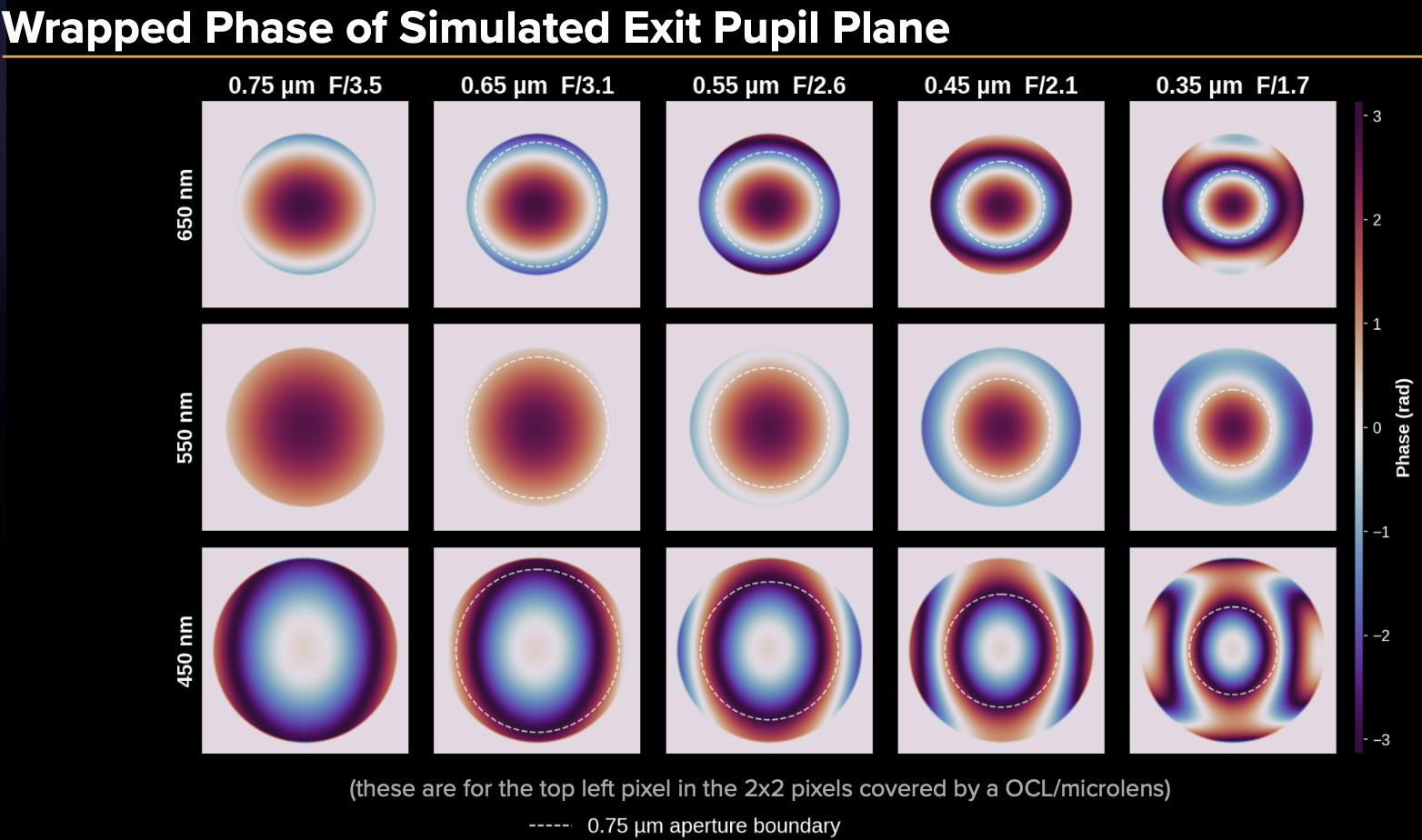

The simulation pipeline (shown in Figure 3) begins with modeling the optics and sensor behavior to create realistic RAW images. We start with a representative Zernike wavefront for a typical smartphone lens module. The pupil (i.e. aperture) is truncated based on the selected F/# for that camera configuration. Then we take a Fourier Transform of the pupil function to propagate to the image sensor plane. We also incorporate the sensor's on-chip lens (OCL) effects to achieve the final PSFs shown in Figure 4. We synthesize the final RAW image with Hex Bayer mosaicking (as found on many current 200MP sensors), and model sensor noise. We also account for simulation aspects including SNR normalization. For further experimental details, please see the appendix at the end of this article.
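The pupil-truncation and Fourier-transform steps can be sketched as follows. This is a simplified stand-in, not our simulation code: it replaces the fitted Zernike wavefront with a single spherical-aberration term (`w040_waves`, a hypothetical value), works at one wavelength, and omits the OCL, CFA and noise stages. `aperture_fraction` is the truncated pupil radius relative to the widest aperture.

```python
import numpy as np


def truncated_pupil_psf(aperture_fraction, w040_waves=1.0, n=256, pad=2):
    """Monochromatic PSF of one fixed wavefront, truncated to a given aperture.

    aperture_fraction: pupil radius relative to the widest aperture (1.0 = fastest).
    w040_waves: spherical aberration at the edge of the widest pupil, in waves
                (illustrative value only).
    Returns the normalised PSF and the on-axis Strehl ratio.
    """
    x = np.linspace(-1.0, 1.0, n)
    xx, yy = np.meshgrid(x, x)
    rho = np.hypot(xx, yy)

    mask = rho <= aperture_fraction            # aperture stop for this F-number
    phase = 2.0 * np.pi * w040_waves * rho**4  # same wavefront for every aperture
    pupil = mask * np.exp(1j * phase)

    # Fraunhofer propagation: the PSF is the squared magnitude of the
    # Fourier transform of the (zero-padded) pupil function.
    field = np.fft.fft2(pupil, s=(n * pad, n * pad))
    psf = np.fft.fftshift(np.abs(field) ** 2)
    psf /= psf.sum()

    # On-axis intensity relative to an unaberrated pupil of the same size.
    strehl = np.abs(pupil.sum()) ** 2 / mask.sum() ** 2
    return psf, strehl


psf_slow, strehl_slow = truncated_pupil_psf(0.5)  # stopped-down aperture
psf_fast, strehl_fast = truncated_pupil_psf(1.0)  # wide-open aperture
print(f"Strehl, stopped down: {strehl_slow:.3f}")
print(f"Strehl, wide open:    {strehl_fast:.3f}")
```

In this toy model, opening the aperture admits the outer part of the wavefront, where the phase error is largest, so the Strehl ratio falls sharply even though the diffraction-limited spot would be smaller.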

Traditional ISP and Neural ISP image restoration methodology

We feed the RAW image into a representative “traditional ISP” for comparison. It performs bilinear demosaicing, followed by a standard forward ISP color/tone chain (white balance, color correction, tone mapping, and gamma). Sensor noise is suppressed using a pyramid bilateral filter operating in YCbCr space, with conservative luma filtering to preserve detail and more aggressive chroma filtering to suppress the color noise introduced by demosaicing. A light unsharp mask is applied last to recover edge sharpness. This is representative of a conventional hardware ISP without any learned or optics-aware processing.

We also feed the raw image into our Neural ISP which replaces the majority of the traditional imaging pipeline — from RAW denoising, CFA interpolation (demosaicing), multi-frame alignment and fusion, through to noise filtering, sharpening, and detail enhancement — with a single learned neural network tailored to each camera, operating end-to-end on the RAW sensor data. The network used for these experiments is a simple CNN trained per camera module that we simulated, using paired RAW and ground truth data (typically obtained from real cameras through our automated optical and sensor characterization process, but in this case using our simulation data). Because it is trained on data that captures the specific PSF, noise characteristics, and CFA layout of the target sensor and lens combination, the network implicitly learns to invert these degradations jointly rather than treating them as independent processing stages. This is fundamentally different from a traditional ISP, where each stage operates without knowledge of the optical system that produced the input signal.

The result is a full RAW-to-RGB neural ISP that handles demosaicing, denoising, aberration correction, and detail recovery in a single forward pass. Standard post-processing steps such as color space conversion, tone mapping, and compression remain downstream and can be customized per application. Our Glass AI platform supports any CFA pattern (Bayer, Quad, Hex, RGBW, RGB-IR, and others) and any pixel size, with deployment optimized for on-device execution on mobile NPUs including Qualcomm Snapdragon HTP.

Simulation configurations

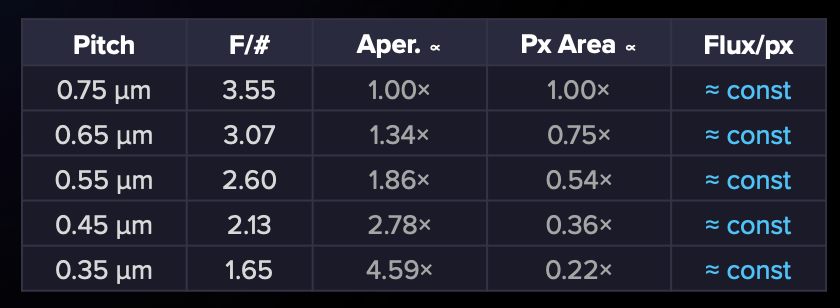

In the real world, as pixel pitch decreases, camera module designers typically reduce the F-number proportionally. We formalized this as our experimental control variable: F/# ÷ pitch = constant. This keeps the diffraction spot size fixed in pixel units across all configurations, so any changes we see in image quality are attributable to geometric aberrations and spatial sampling — not diffraction.
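A short derivation shows why this control variable pins the diffraction spot in pixel units. It assumes a circular aperture and a single representative wavelength; the symbols (Airy disk diameter, pitch p, F-number N, constant k) are notation introduced here for illustration.

```latex
\[
d_{\text{Airy}} = 2.44\,\lambda N
\quad\Longrightarrow\quad
\frac{d_{\text{Airy}}}{p} = 2.44\,\lambda\,\frac{N}{p} = 2.44\,\lambda\,k,
\qquad
k = \frac{N}{p} = \text{const.}
\]
```

For the two end-point configurations quoted in this article, k = 1.66 / 0.35 ≈ 4.74 µm⁻¹ and k = 3.54 / 0.75 = 4.72 µm⁻¹, so at an assumed λ = 0.55 µm the Airy disk spans roughly 6.3 to 6.4 pixels in both cases.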

We simulated five configurations spanning a roughly 2× range of pixel pitch, from 0.75 µm down to 0.35 µm, as shown in Figure 5. For each configuration, the RAW images were synthesized using the forward model described above, and a neural network was trained on a set of more than 11,000 RAW and ground-truth pairs and tested on 150 unseen evaluation images to calculate the metrics below.

Results: The Neural ISP Advantage Grows with Smaller Pixels

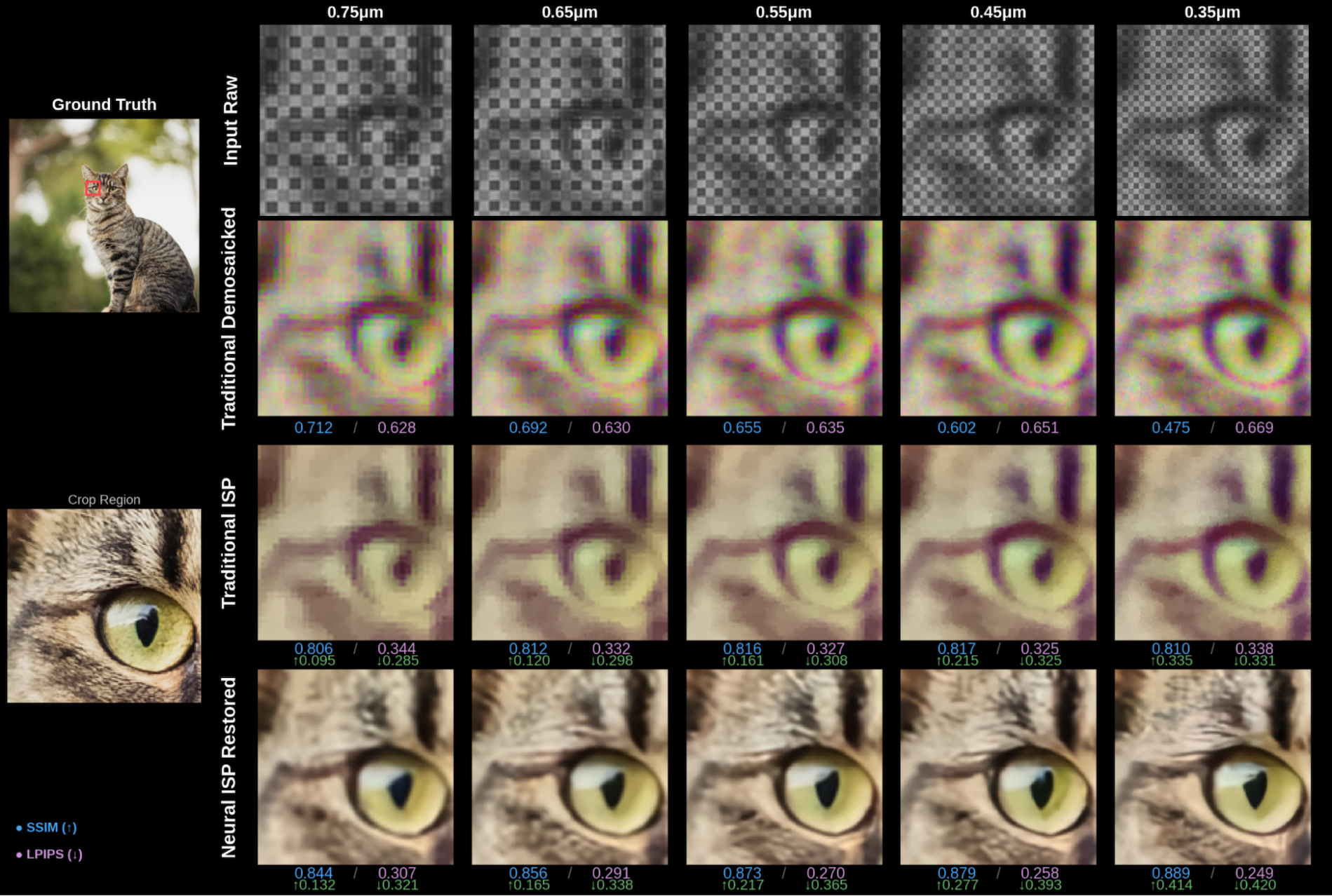

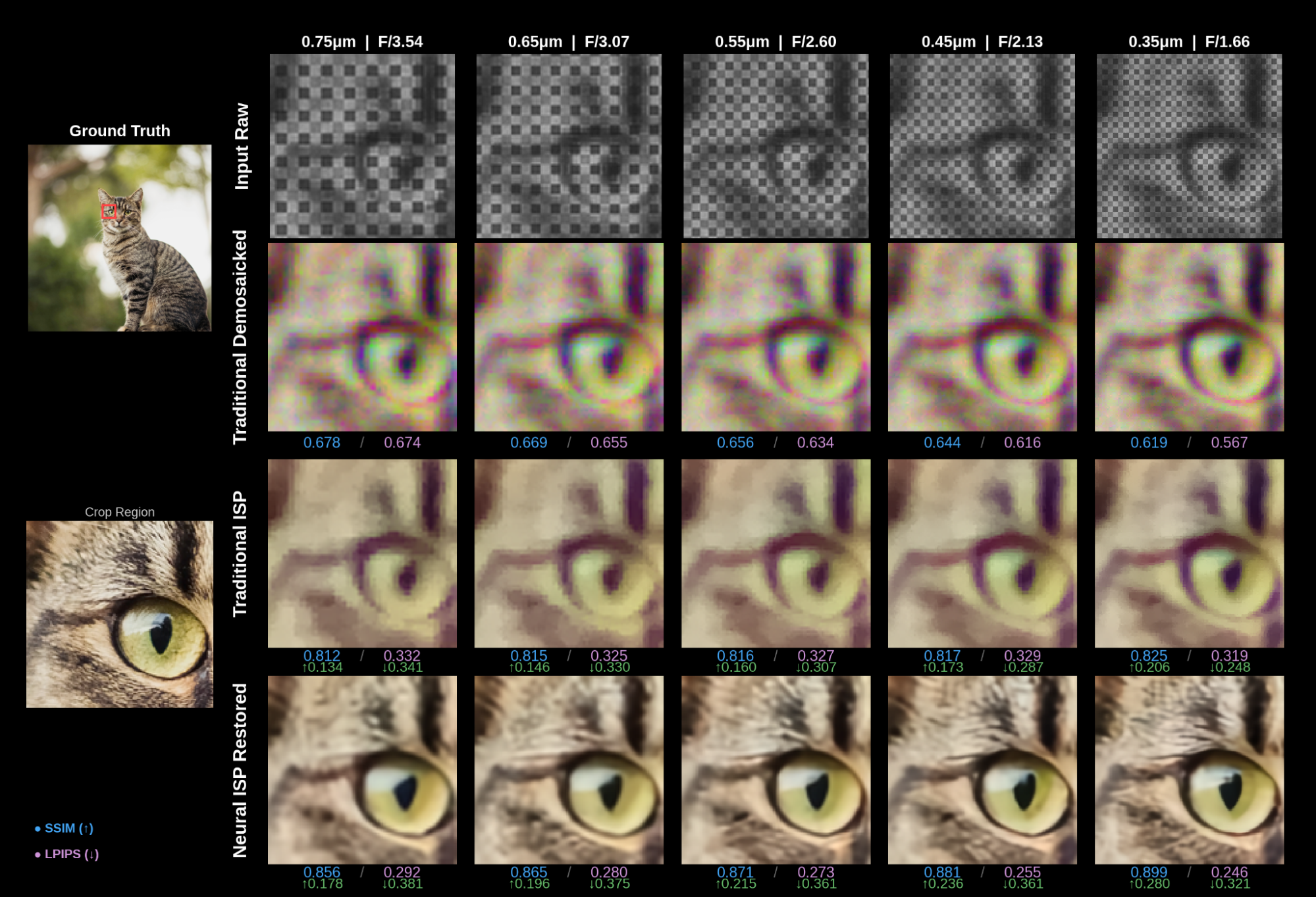

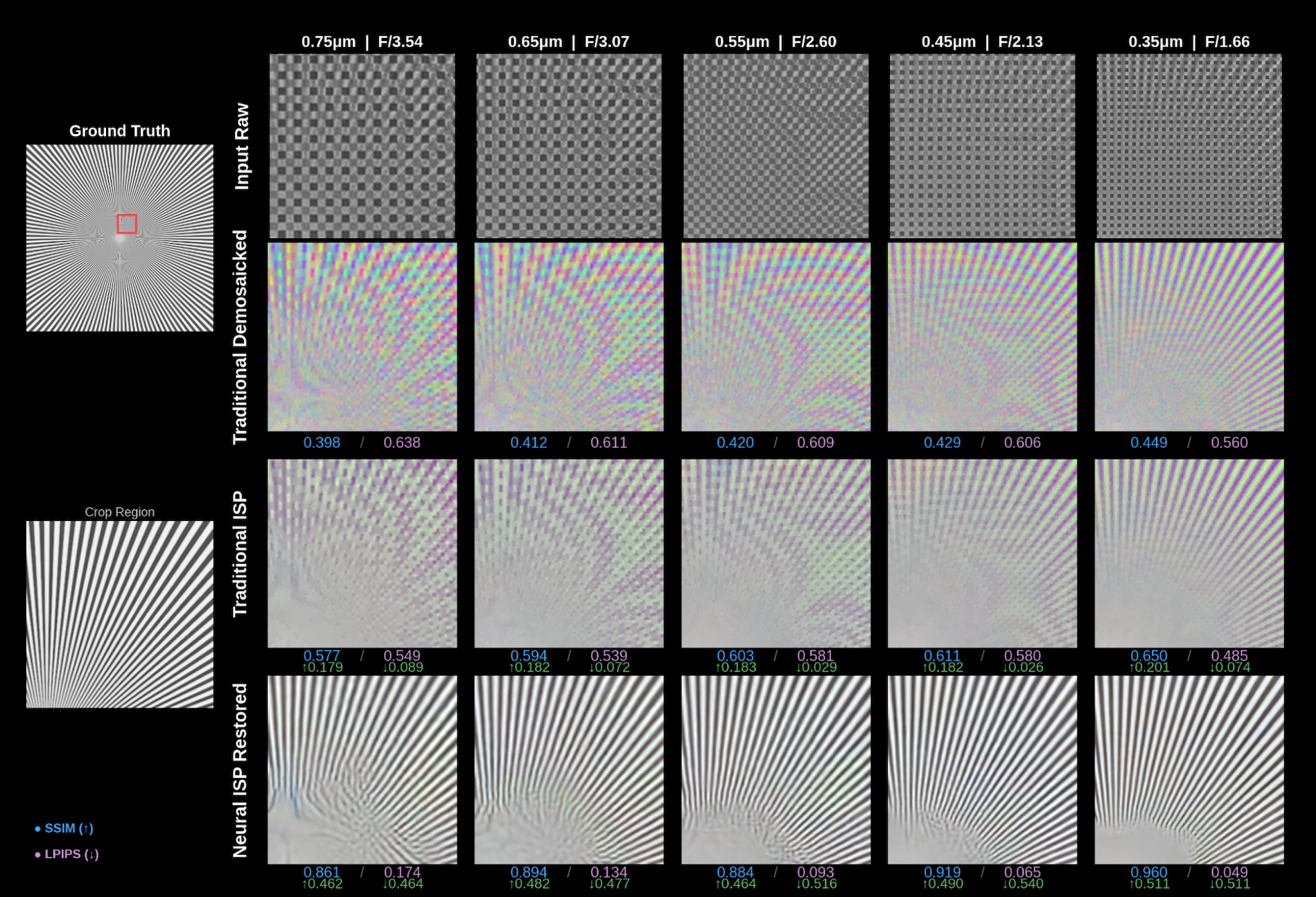

Visual comparison across pixel sizes shows Neural ISP recovers more detail

Our initial results demonstrate the importance of Neural ISPs for image restoration as we move to sensors with smaller pixels. We simulated several test images through our pipeline modeling the image formation and restoration process. For each test image, we show the input RAW (mosaicked), the traditional bilinear demosaic, the traditional ISP output, and the neural ISP restoration across all five pixel pitch / F-number configurations.

Natural Images: On a detailed crop from natural images, the traditional ISP output remains blurry and smoothed even as pixel density increases. This is because the PSF also spans more pixels and hence blurs the more densely sampled scene content. The neural ISP output, by contrast, improves in sharpness and detail as the pixel density increases because it is PSF aware and can undo this effect. The 0.35 µm | F/1.66 neural ISP result resolves finer scene detail than the 0.75 µm | F/3.54 result — despite the lens producing significantly worse aberrations at smaller F/#.

Text and fine detail: On a mixed-script text chart (Latin and CJK characters), the traditional ISP output is essentially unreadable across all configurations due to severe artifacts from Hex CFA demosaicing and uncorrected chromatic aberrations. The neural ISP recovers legible text across the full pitch range, with progressively sharper rendering at smaller pitches.

Resolution charts: On converging line patterns, higher pixel density helps resolve finer lines in both cases. However, the traditional ISP still shows moiré effects at smaller pixel sizes. The Neural ISP cleanly resolves the line patterns due to PSF aware deconvolution, with visibly finer detail preserved at 0.35 µm than at 0.75 µm.

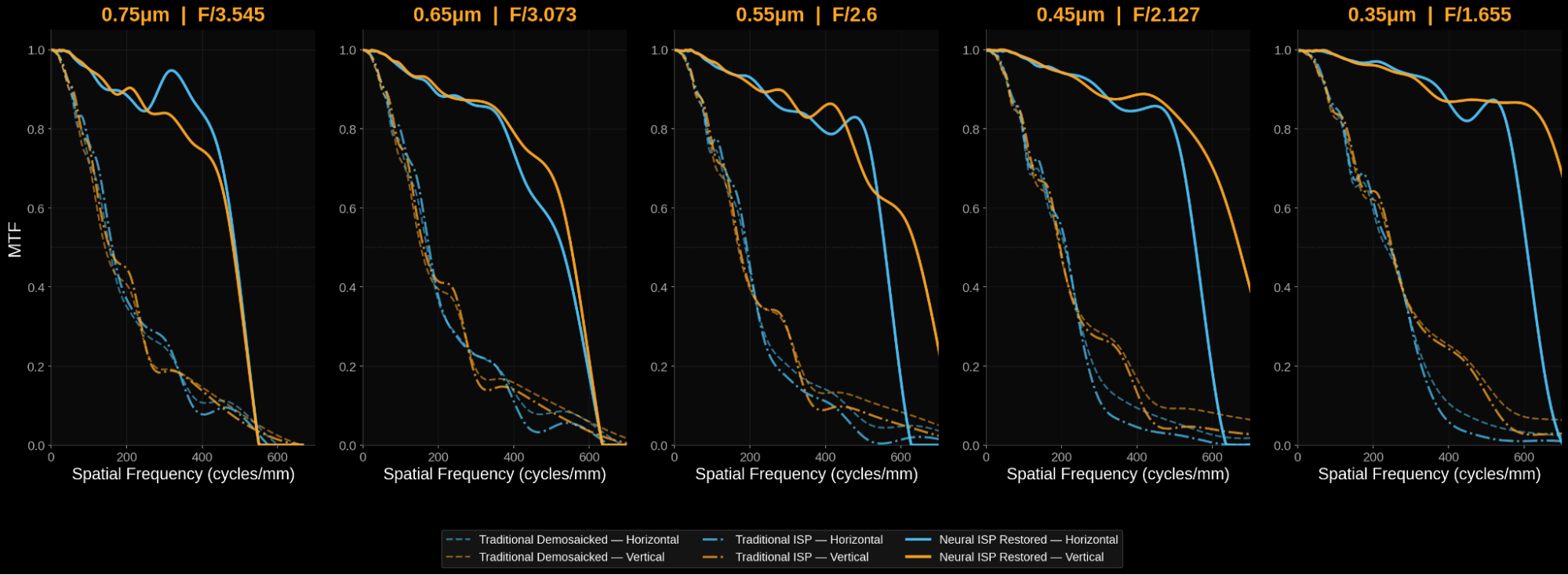

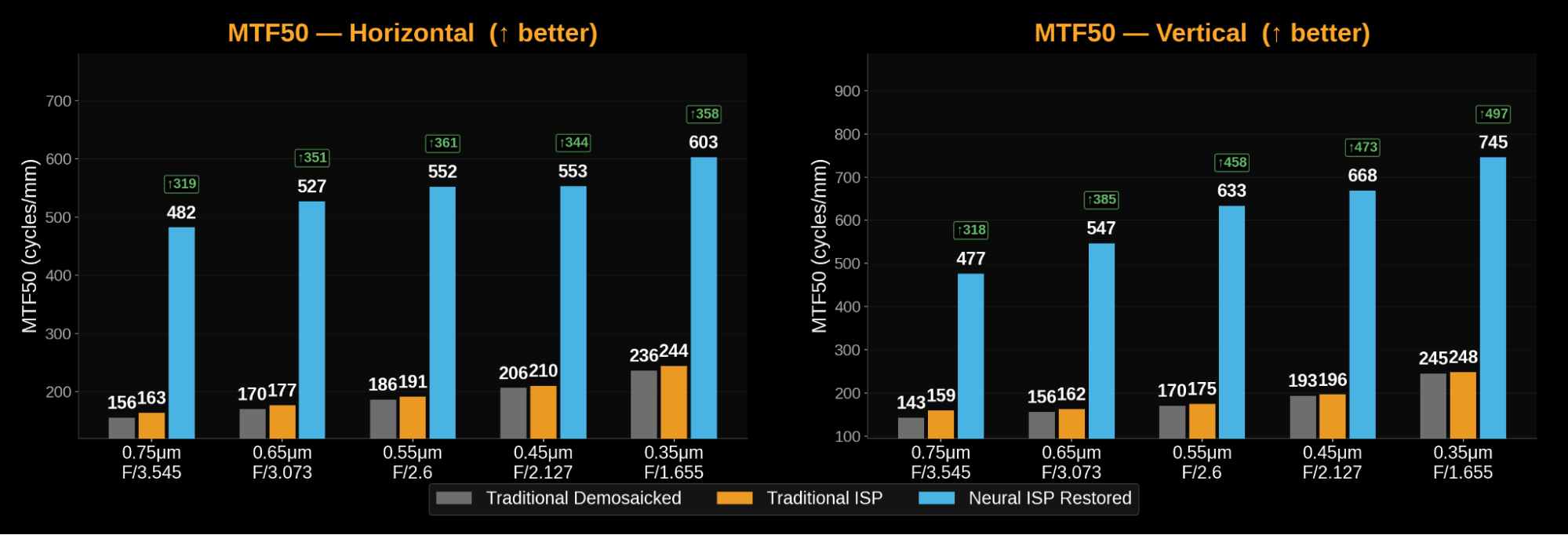

The MTF resolution analysis shows Neural ISP retains more spatial frequencies

The Modulation Transfer Function (MTF) curves tell us how the two imaging pipelines attenuate spatial frequencies and hence detail. The traditional ISP behaves similarly to the demosaiced output because the simple operations in the pipeline do not expand the spatial frequency limit. The unsharp mask (USM) subtracts low-frequency content from the image to make it look sharper; it does not recover high-frequency content. For the traditional ISP, contrast drops off rapidly at moderate spatial frequencies, and it falls progressively further behind the Neural ISP as we move to smaller pixels and faster apertures. For the Neural ISP, the MTF curves are elevated and maintain high contrast out to much higher frequencies, with the gap between the two methods widening at smaller pixel sizes.
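The point about the unsharp mask can be illustrated with its frequency response. The sketch below assumes the common formulation `out = img + amount * (img - gaussian_blur(img))`; the `amount`, `sigma_px` and the stand-in system MTF are hypothetical values, not the settings of the traditional ISP used in this study.

```python
import numpy as np


def usm_gain(freq_cyc_per_px, amount=0.5, sigma_px=1.5):
    """Frequency response of out = img + amount * (img - gaussian_blur(img))."""
    gaussian_tf = np.exp(-2.0 * (np.pi * sigma_px * freq_cyc_per_px) ** 2)
    return 1.0 + amount * (1.0 - gaussian_tf)


freqs = np.linspace(0.0, 0.5, 6)           # cycles/pixel, up to Nyquist
gain = usm_gain(freqs)

# Hypothetical MTF of a blurred optical system (illustration only).
system_mtf = np.exp(-(freqs / 0.15) ** 2)
sharpened_mtf = system_mtf * gain

for f, before, after in zip(freqs, system_mtf, sharpened_mtf):
    print(f"{f:.1f} cyc/px: MTF {before:.4f} -> {after:.4f}")
```

Because the gain never exceeds `1 + amount`, the filter can only rescale whatever contrast survives the optics; frequencies that the PSF has already driven close to zero stay close to zero.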

MTF50 improves with both methods as pixels shrink (because there are more pixels per mm), but the Neural ISP improves far more dramatically. The traditional ISP gains about 25% from 0.75 to 0.35 µm, while the Neural ISP gains about 50%, and does so from a much higher baseline, resulting in an absolute MTF50 that reaches 745 cycles/mm in the vertical direction at 0.35 µm.

One observation worth noting: at the highest spatial frequencies, the neural ISP output shows a sharper rolloff than the traditional demosaic. The network is effectively trading some very high-frequency content (near the Nyquist limit) for robust mid-frequency recovery. This is a perceptually favorable exchange, and it is consistent with the strong anti-aliasing and moiré suppression seen in the resolution charts.

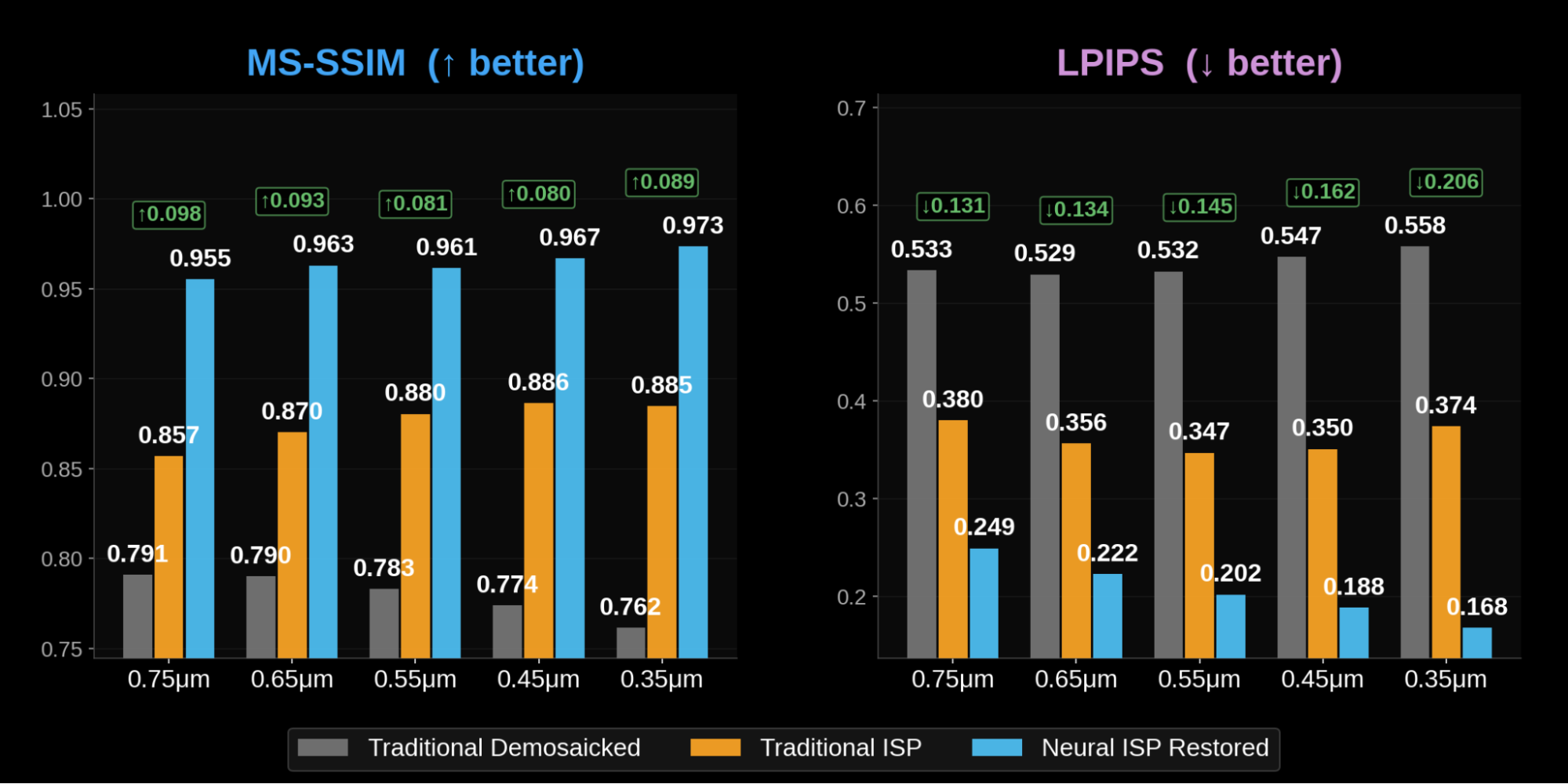

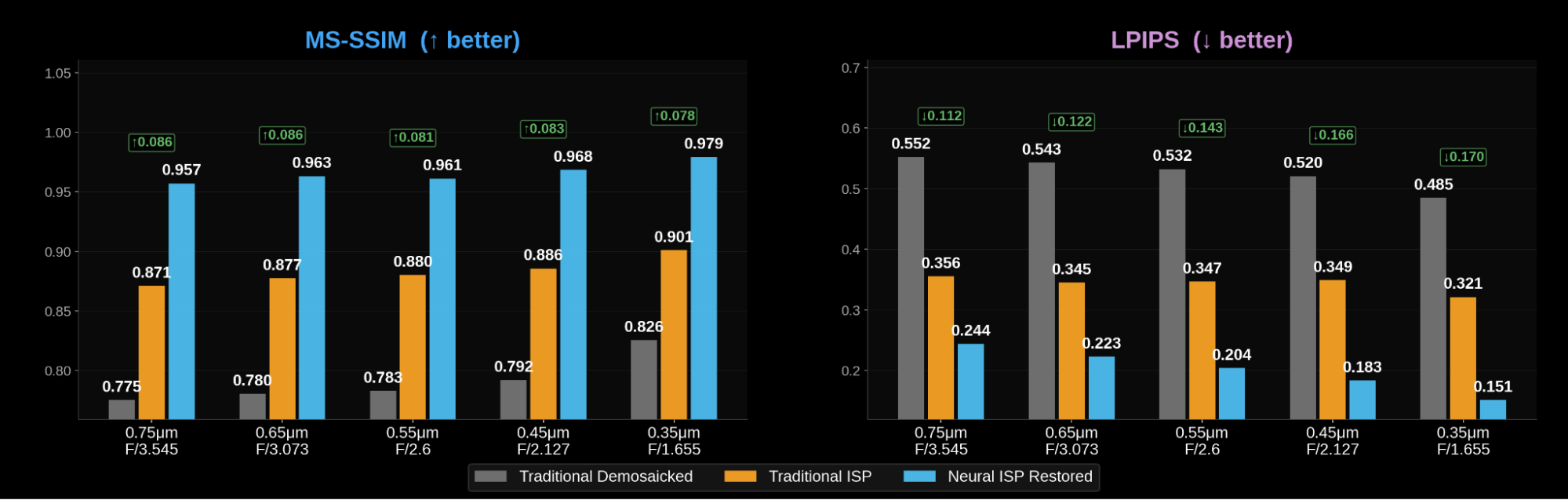

Perceptual metrics for image quality show improved performance with Neural ISP at smaller pixels

- MS-SSIM: the Neural ISP scores are very high across the board (0.957 to 0.979), and the absolute delta appears to decrease slightly at the smallest pitches. This is a known ceiling effect: MS-SSIM compresses gains as scores approach 1.0, making each incremental improvement harder to register. The traditional ISP does improve from 0.871 to 0.901, reflecting the higher sampling density.

- LPIPS: often regarded as more perceptually accurate than MS-SSIM, the LPIPS score for the Neural ISP improves from 0.244 at 0.75 µm to 0.151 at 0.35 µm, while the traditional ISP shows relatively flat perceptual quality (0.356 to 0.321). The traditional ISP results match what we observed in the natural image analysis: the increased sampling density was offset by a larger effective PSF, leading to persistent blurriness even at reduced sensor pixel sizes.

Robustness across aperture conditions

We also performed an ablation study by shrinking the pixel pitch while keeping the aperture fixed (e.g., F/2.6). In this scenario, the diffraction spot covers progressively more pixels as pitch decreases, and per-pixel SNR drops since the same total light is spread across more, smaller pixels. As expected, the traditional ISP degrades more severely than in the proportional-scaling case. This confirmed the historic 'diffraction wall' challenge for small pixels. Critically, the neural ISP remained robust and performed well, showing relative insensitivity to whether image degradation was caused by geometric aberrations (from a fast aperture) or diffraction (from a slow aperture). See Appendix for Figures.
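The two effects described for the fixed-aperture case can be estimated with back-of-the-envelope arithmetic. This sketch assumes an ideal Airy pattern at a hypothetical 0.55 µm wavelength and purely shot-noise-limited pixels; the function names are illustrative.

```python
import math

WAVELENGTH_UM = 0.55   # assumed representative wavelength
F_NUMBER = 2.6         # aperture held fixed across pixel pitches


def airy_diameter_px(pitch_um):
    """Airy disk diameter in pixels at the fixed F-number."""
    return 2.44 * WAVELENGTH_UM * F_NUMBER / pitch_um


def relative_snr_db(pitch_um, ref_pitch_um=0.75):
    """Shot-noise SNR change versus the reference pitch.

    At fixed F-number the photons per pixel scale with pixel area (pitch**2),
    and shot-noise SNR scales with the square root of that, i.e. with pitch.
    """
    return 20.0 * math.log10(pitch_um / ref_pitch_um)


for pitch in (0.75, 0.35):
    print(
        f"{pitch:.2f} um: Airy disk {airy_diameter_px(pitch):.2f} px, "
        f"SNR change {relative_snr_db(pitch):+.1f} dB"
    )
```

Under these assumptions the diffraction spot grows from about 4.7 to about 10 pixels and per-pixel SNR falls by roughly 6.6 dB when moving from 0.75 µm to 0.35 µm at F/2.6.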

While the neural ISP handles both effectively, proportional scaling of F/# to pitch remains the clearly preferable scenario, as it maintains per-pixel SNR, avoids the diffraction wall, and provides the network with the cleanest spatial information to maximize resolution.

Low-light performance

A common concern with small pixels is low-light performance. This is because the well capacity is reduced with pixel size and hence the obtainable shot-noise limited SNR also decreases.

We tested this internally by comparing the traditional ISP and neural ISP at approximately 15 dB SNR (a challenging scenario equivalent to dark indoor or twilight conditions) and at 35 dB SNR for relatively bright light. In our preliminary results, the neural ISP denoises more effectively than the traditional method. The tradeoff we saw in the low-light case is some smoothing and loss of detail compared to the 35 dB case (though still with better detail than the traditional ISP). This is expected behaviour, as our network avoids hallucinating or generating features beyond the signal that is actually detectable in the single-frame input RAW.

However, this represents a worst case scenario. In practice, both the traditional ISP and the Neural ISP would use a multi-frame fusion computational photography approach, meaning the effective SNR would be higher than the single frame 15dB case, even in relatively low light scenarios. The result would be that the Neural ISP would continue to recover finer details than the traditional approach. Stay tuned for a follow-up investigating low-light multi-frame Neural ISP performance.

Conclusion: What This Means for the Next Generation of Image Sensors

Neural ISPs open the doorway to smaller pixels

Traditionally, pixel scaling faced several challenges: smaller pixels meant higher noise and the need for better (and more expensive) optics since traditional ISPs were unable to incorporate PSF effects and correct for geometric aberrations. Our results suggest that with Neural ISP based image restoration, these challenges can be overcome.

The key result is that the denser spatial sampling provided by smaller pixels is a net positive, even when it comes with worse geometric aberrations, provided:

1. Ideally the aperture is scaled proportionally to maintain per-pixel SNR

2. A Neural ISP is used to jointly handle demosaicing, denoising, and optical aberration correction.

Why does the Neural ISP benefit disproportionately?

Due to historical mobile computational limits, a traditional ISP does not deconvolve the PSF blur kernel from the sensor capture. It operates locally and treats each step (interpolation, denoising, sharpening) independently. It has no model of the PSF and no ability to invert aberrations. When the PSF is small and well-behaved (large pixels, slow aperture), this doesn’t matter much. When aberrations are severe, the PSF blurring remains after the traditional ISP pipeline. Strengthening the traditional ISP steps (e.g. via chroma filtering) to try to mitigate the PSF blurring leads to more severe artifacts and loss of information.

A neural ISP is trained end-to-end on the specific sensor and optical characteristics and so has access to an implicit model of the PSF, the CFA pattern, the noise statistics, and their interactions. In addition to undoing the PSF blurring, it learns to invert the combined degradation in a single pass. In the small pixel era, sensors have denser sampling and hence more pixels per unit scene area for the network to take advantage of during image restoration.

Implications for future sensor design

Our results point toward a design philosophy where:

- Pixel pitch can continue to shrink below 0.5 µm with confidence that neural restoration can handle the optical consequences

- Fast apertures are essential at small pixel sizes — not just for light gathering, but to avoid the diffraction wall that makes extra pixels pointless

- The lens doesn’t need to be perfect. Rather than spending enormous design effort eliminating every last aberration for a faster F-number, the optical design can be “good enough” and lean on the neural ISP to handle the residual. We can use a wider lens aperture, without necessarily needing a more complicated design, to take advantage of the small pixels. This opens up the design space for simpler, thinner, or more aggressive optical assemblies.

- Co-design of optics and Neural ISP — where the lens is explicitly optimized to produce aberrations that the Neural ISP can efficiently correct — represents a natural next step. (This is something we’re actively pursuing with our Deep Optics program.)

The small-pixel era isn’t just a challenge — it’s an opportunity. The conventional wisdom that shrinking pixels inevitably degrades image quality assumes a conventional ISP pipeline. With a Neural ISP operating end-to-end from RAW to RGB, smaller pixels provide denser spatial sampling that the network can exploit to achieve higher resolving power, even as the optics become progressively more imperfect.

Our simulation study demonstrates this across a 2× range of pixel pitches: MTF50 increases by over 50% from 0.75 µm to 0.35 µm with neural restoration, the Neural ISP delivers consistent improvements regardless of whether the primary degradation is geometric aberration or diffraction, and these benefits extend to low-light scenarios when the aperture is scaled appropriately.

For sensor designers, module integrators, and device OEMs: the physics wall is real, but neural image processing gives us a ladder to climb over it.

-----

Glass Imaging develops Neural ISP technology for various markets including smartphone cameras, XR, drones, automotive, and professional imaging. Our Glass AI neural ISP runs on-device in real-time on Qualcomm Snapdragon platforms. For more information, visit glass-imaging.com.

Appendix

Wavefront-based PSF simulation

Since real lens prescriptions from manufacturers are proprietary, we used a Zernike polynomial wavefront that is fitted to real smartphone camera measurements as a proxy for a telephoto lens assembly. The key insight of our approach: we use the same underlying wavefront across all configurations, and simply truncate the pupil aperture to match each F-number. As the aperture widens, it captures more of the wavefront’s outer regions — where aberrations are strongest. This mirrors what happens physically when a real lens is operated at a faster F-number.

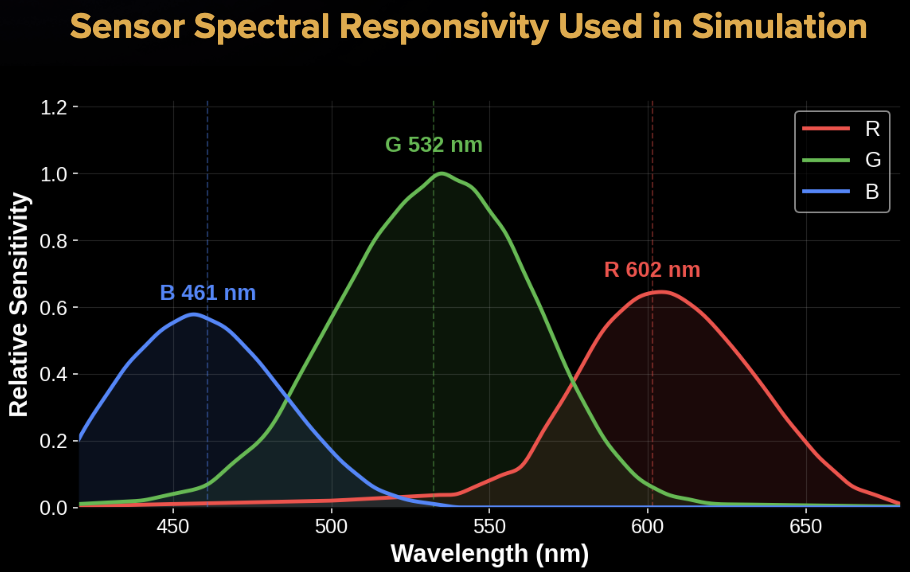

We simulated the full exit pupil wavefront across 50 wavelengths spanning 420 to 680 nm, then computed PSFs using a realistic sensor spectral sensitivity curve (see Figure 13) to generate an incoherent polychromatic PSF. The Zernike coefficients were manually tuned to approximate the aberration profile of a modern folded telephoto module, including controlled levels of spherical aberration, astigmatism, and higher order terms.
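The polychromatic step can be sketched as a weighted sum of monochromatic PSFs. Everything specific here is an assumption for illustration: the optical path difference (`opd_nm`) is a single spherical-aberration term rather than our fitted wavefront, the spectral weights are a Gaussian stand-in for a real sensitivity curve, and the grid sizes are arbitrary. The pupil radius in samples is scaled by 1/λ so that every wavelength lands on the same sensor-plane sampling grid.

```python
import numpy as np


def mono_psf(wavelength_nm, opd_nm, ref_radius_px=16.0,
             ref_wavelength_nm=680.0, n_fft=256):
    """Monochromatic PSF on a wavelength-independent sensor grid."""
    # FFT output spacing scales with wavelength / pupil width (in samples),
    # so shrinking the pupil for longer wavelengths keeps the spacing fixed.
    radius_px = ref_radius_px * ref_wavelength_nm / wavelength_nm
    c = np.arange(n_fft) - n_fft // 2
    xx, yy = np.meshgrid(c, c)
    rho = np.hypot(xx, yy) / radius_px
    mask = rho <= 1.0
    phase = 2.0 * np.pi * opd_nm(rho) / wavelength_nm  # same OPD, phase ~ 1/lambda
    pupil = mask * np.exp(1j * phase)
    psf = np.fft.fftshift(np.abs(np.fft.fft2(pupil)) ** 2)
    return psf / psf.sum()


def polychromatic_psf(opd_nm, weights_fn, n_wavelengths=50):
    """Incoherent sum of monochromatic PSFs from 420 to 680 nm."""
    wavelengths = np.linspace(420.0, 680.0, n_wavelengths)
    weights = weights_fn(wavelengths)
    psfs = np.stack([mono_psf(w, opd_nm) for w in wavelengths])
    return np.tensordot(weights / weights.sum(), psfs, axes=1)


def spherical_opd(rho):
    return 200.0 * rho**4          # hypothetical: 200 nm at the pupil edge


def green_like_weights(wl):
    return np.exp(-0.5 * ((wl - 550.0) / 60.0) ** 2)  # stand-in sensitivity


psf = polychromatic_psf(spherical_opd, green_like_weights)
print(psf.shape, float(psf.sum()))
```

In practice one such PSF would be generated per color channel, using that channel's own sensitivity curve as the weights.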

SNR normalization, sensor and lens size

An important property of proportional scaling: the increase in aperture area exactly compensates for the decrease in pixel area, so photon flux per pixel remains constant across all configurations. Hence so does the signal to noise ratio (SNR), which we assume is shot noise dominated. This means any differences in output quality are not due to noise advantages.
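The SNR argument can be written out in one line. This is a sketch that assumes the same scene radiance, exposure time, lens transmission and quantum efficiency for every configuration; p is the pixel pitch, N the F-number, and k = N / p the constant held fixed in the study (notation introduced here).

```latex
\[
\Phi_{\text{pixel}} \;\propto\; \underbrace{\frac{1}{N^{2}}}_{\text{image-plane irradiance}}
\times \underbrace{p^{2}}_{\text{pixel area}}
= \frac{1}{k^{2}},
\qquad
\mathrm{SNR}_{\text{shot}} = \sqrt{\Phi_{\text{pixel}}} \;\propto\; \frac{1}{k}
\]
```

Since k is the same for every configuration, both the photon count per pixel and the shot-noise-limited SNR are independent of pitch.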

With a fixed sensor size, smaller pixels simply increase the megapixel count while maintaining the same field of view. Moving from 0.75 µm to 0.35 µm more than quadruples the pixel count — and therefore the spatial sampling density of the scene. This also implies our simulation does not change the focal length or z-height (total track length, or TTL) of the lens, meaning designs remain physically plausible without modification of the smartphone’s industrial design.

One tendency in recent years has been for the TTL to increase in order to support longer focal lengths to improve zoom or more lens elements to improve image quality. But this trend is difficult to sustain as camera modules take up more physical space inside the phone. Though innovations such as periscope lenses and tetraprisms (as well as larger camera bumps) have enabled longer optical paths, they still take up real estate inside the device. Our simulations show that simply by increasing the aperture size on an existing design we can make the most of smaller pixels.

Modeling of 2×2 OCL microlens effect

Modern high-resolution sensors use on-chip lenses (OCLs) shared across a 2×2 pixel group. Because each pixel beneath the microlens receives light from a slightly different angular distribution, the chief ray angle (CRA) varies appreciably across the four pixels, ultimately yielding four distinct PSFs within each microlens unit. To model this microlens effect, we introduced small global tilt and coma offsets across the four phase positions, thereby simulating a “pixel-imbalance effect” that typically appears as strong edge fringing in mosaic raw images and remains a well-known challenge in Quad and Hex CFA demosaicing.

Raw Image Synthesis

After all the optics, we simulate the effects of the sensor. This includes mosaicking through a Hex Bayer CFA pattern and corrupting with sensor noise (additive Gaussian) at approximately 35 (or 15) dB. Note that we accounted for the CFA pattern transmittance by using the spectral responsivity in the PSF generation step.
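The mosaicking and noise steps can be sketched as below. Two assumptions are made loudly here: the Hex Bayer layout is taken to be a Bayer (RGGB) pattern in which each cell is a 4×4 block of same-color pixels, and SNR is defined as mean signal power over noise variance. Neither the function names nor these definitions are taken from our production pipeline.

```python
import numpy as np


def cfa_channel_map(height, width, block=4):
    """Channel index (0=R, 1=G, 2=B) per pixel for a block-Bayer layout.

    block=4 is the assumed Hex layout; block=2 would give Quad, block=1 Bayer.
    """
    bayer = np.array([[0, 1], [1, 2]])              # RGGB unit cell
    rows = (np.arange(height) // block) % 2
    cols = (np.arange(width) // block) % 2
    return bayer[rows[:, None], cols[None, :]]


def mosaic(rgb, block=4):
    """Keep one color sample per pixel according to the CFA layout."""
    cmap = cfa_channel_map(rgb.shape[0], rgb.shape[1], block)
    return np.take_along_axis(rgb, cmap[..., None], axis=2)[..., 0]


def add_noise_at_snr(clean, snr_db, rng):
    """Add zero-mean Gaussian noise so that 10*log10(P_signal/P_noise) = snr_db."""
    noise_sigma = np.sqrt(np.mean(clean**2) / 10.0 ** (snr_db / 10.0))
    return clean + rng.normal(0.0, noise_sigma, size=clean.shape)


rng = np.random.default_rng(0)
rgb = rng.uniform(0.2, 1.0, size=(64, 64, 3))       # stand-in for a blurred scene
clean_raw = mosaic(rgb)
noisy_raw = add_noise_at_snr(clean_raw, snr_db=35.0, rng=rng)

measured = 10.0 * np.log10(np.mean(clean_raw**2) / np.mean((noisy_raw - clean_raw) ** 2))
print(f"measured SNR: {measured:.1f} dB")
```

Passing `snr_db=15.0` instead gives the low-light condition used in the low-light comparison.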

Assumptions and simplifications

We made some assumptions to simplify the simulation including:

- Pixel-level wave optics: diffraction at the pixel aperture and optical crosstalk between adjacent pixels are not modeled, as these effects require detailed knowledge of the sensor’s physical structure.

- Quantum efficiency is assumed to be preserved across pixel sizes due to advances in sensor technology and the underlying absorptive material remaining the same.

- The wavefront is not re-optimized per configuration. A real lens designer would adjust the optical design when targeting a faster F-number. Our approach deliberately holds the aberration profile constant to isolate the effect of aperture scaling — making it a somewhat pessimistic scenario for smaller pixels. This puts a higher burden on the Neural ISP for deblurring.

Evaluation Metrics

We evaluate both the traditional demosaiced output and the Neural ISP restored output using:

- MTF (Modulation Transfer Function) curves: measured by feeding simulated sinusoidal grating images at progressively finer spatial frequencies through the full imaging simulation and ISP pipeline, then comparing input-to-output contrast at each frequency via FFT. The curve shows how strongly each spatial frequency is attenuated through the pipeline.

- MTF50: the spatial frequency at which the system MTF drops to 50%, reported in cycles/mm. Higher values indicate better spatial resolution.

- MS-SSIM: multi-scale structural similarity measures how similar the output image is to the ground truth. Higher is better; scores are averaged over approximately 150 test images.

- LPIPS: learned perceptual image patch similarity is another metric that measures the image quality of the output against the ground truth. Lower is better; scores are averaged over the same test set.

A critical detail: all spatial-frequency results (the MTF curves and MTF50) are reported in cycles/mm (physical units), not cycles/pixel. This correctly accounts for the higher sampling density of smaller pixels and avoids penalizing them for having more pixels per unit scene area.
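The grating-based MTF measurement and the conversion from cycles/pixel to cycles/mm can be sketched in one dimension. The `gaussian_blur` pipeline is a hypothetical stand-in for the full simulation plus ISP, and the 0.5 µm pitch is just an example value.

```python
import numpy as np


def gaussian_blur(signal, sigma_px=1.0):
    """Stand-in pipeline: circular Gaussian blur applied in the Fourier domain."""
    f = np.fft.rfftfreq(signal.size)                     # cycles/pixel
    transfer = np.exp(-2.0 * (np.pi * sigma_px * f) ** 2)
    return np.fft.irfft(np.fft.rfft(signal) * transfer, n=signal.size)


def measure_mtf(pipeline, cycle_counts, n=1024):
    """Contrast ratio (output / input) for gratings with k whole cycles per n pixels."""
    x = np.arange(n)
    freqs, mtf = [], []
    for k in cycle_counts:
        grating = 0.5 + 0.5 * np.cos(2.0 * np.pi * k * x / n)
        out = pipeline(grating)
        contrast_in = np.abs(np.fft.rfft(grating)[k])
        contrast_out = np.abs(np.fft.rfft(out)[k])
        freqs.append(k / n)
        mtf.append(contrast_out / contrast_in)
    return np.array(freqs), np.array(mtf)


def mtf50_cycles_per_mm(freqs_cyc_per_px, mtf, pitch_um):
    """First 50% crossing, linearly interpolated, converted to cycles/mm."""
    below = np.flatnonzero(mtf < 0.5)
    if below.size == 0 or below[0] == 0:
        return None                                      # no crossing in range
    i = below[0]
    f50 = np.interp(0.5, [mtf[i], mtf[i - 1]],
                    [freqs_cyc_per_px[i], freqs_cyc_per_px[i - 1]])
    return f50 / (pitch_um * 1e-3)                       # pixels per mm = 1000 / pitch_um


freqs, mtf = measure_mtf(gaussian_blur, range(8, 512, 8))
result = mtf50_cycles_per_mm(freqs, mtf, pitch_um=0.5)
print(f"MTF50 = {result:.1f} cycles/mm")
```

Dividing by the pitch is what rewards denser sampling: the same MTF50 in cycles/pixel corresponds to a higher MTF50 in cycles/mm on a sensor with smaller pixels.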

Holding the Aperture Size Fixed:

We also tested the case where the pixel size decreased but the aperture size stayed constant at F/2.6. The results are shown below in Figures 14 and 15: